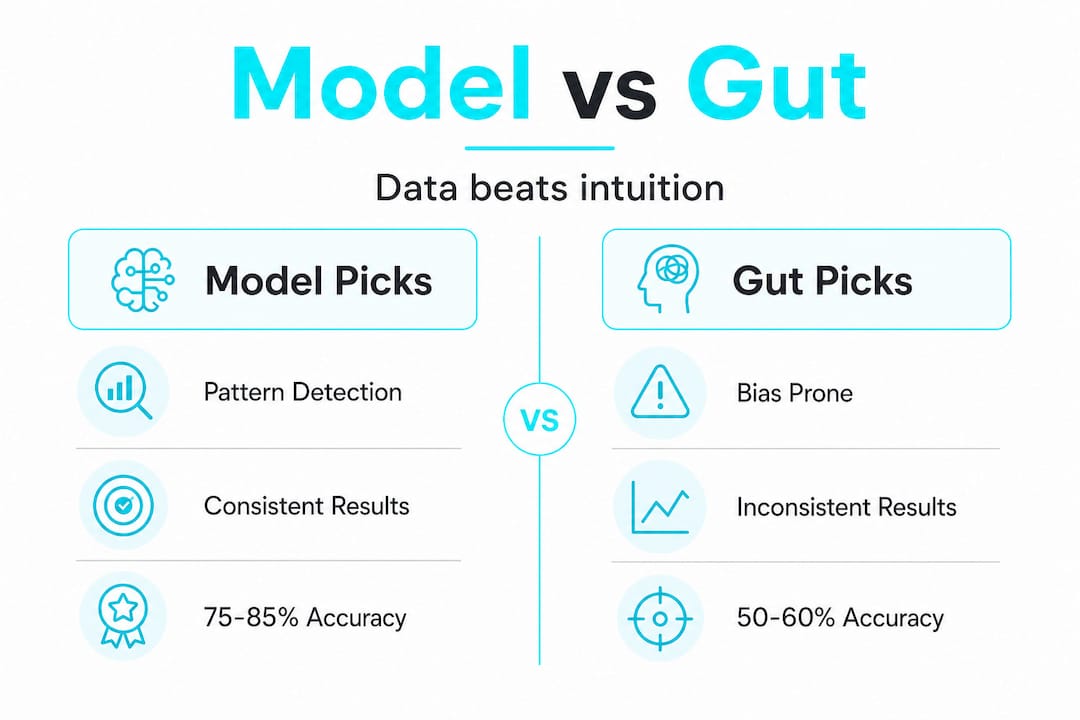

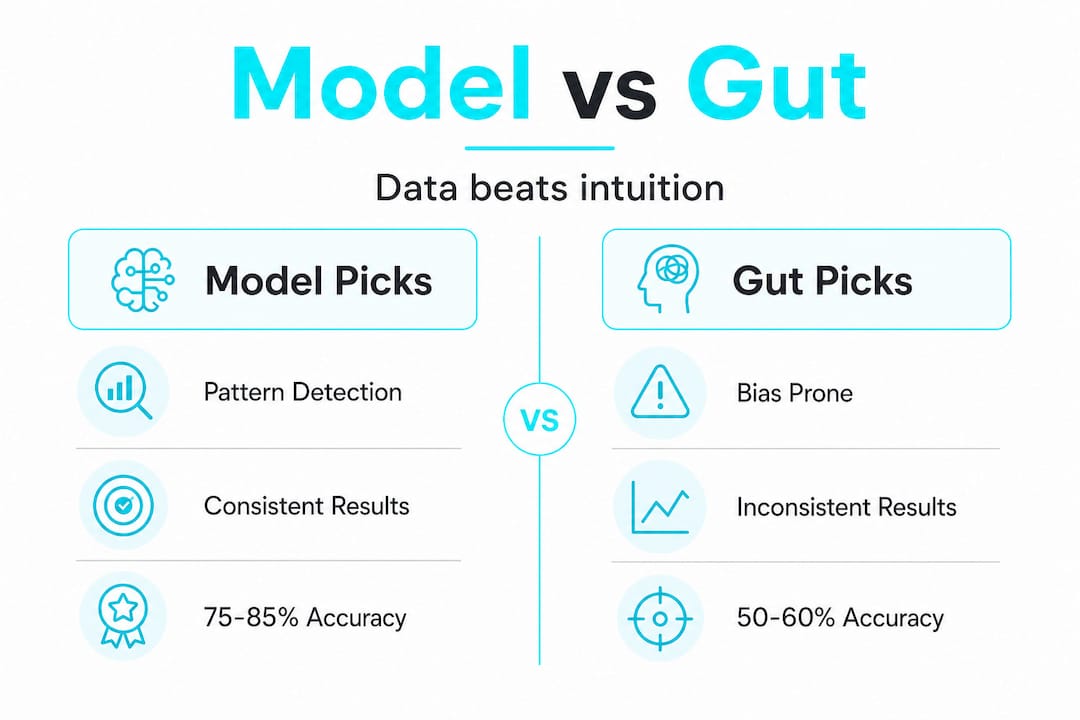

Most bettors swear their gut has a gift. They replay last week’s winning call, trust their “read” on a matchup, and bet with confidence rooted in feeling rather than fact. But here’s what the research actually shows: machine learning models hit 75-85% accuracy on game winners, while the average gut-driven bettor lands somewhere between 50-60%. That gap is not a rounding error. It is the difference between grinding losses and building a real edge over time. This article breaks down exactly why betting models win, where intuition still matters, and how you can start using data-driven picks to improve your results.

Table of Contents

- How betting models process data and identify hidden patterns
- Turning model insights into winning bets: Your practical roadmap
- The uncomfortable truth: Why most bettors misuse models (and how to fix it)

Key Takeaways

| Point | Details |
|---|---|
| Models boost accuracy | Betting models consistently outperform gut picks with up to 85% accuracy. |
| Gut picks are biased | Intuition suffers from cognitive biases, leading to poor betting decisions. |
| Data-driven wins | Applying model insights increases your win rate by 10-20%. |
| Balance model and intuition | Use disciplined judgment alongside models for best results in challenging scenarios. |
| Pro books set the benchmark | Professional sportsbooks leverage sharp models, making disciplined quantitative play essential for beating markets. |

How betting models process data and identify hidden patterns

The first thing to understand is that a betting model does not just “look at stats.” It processes enormous volumes of information simultaneously, across multiple data streams, and without emotional noise clouding the output.

A well-built model pulls from sources like:

- Play-by-play performance data across hundreds of games
- Injury reports and lineup changes updated in near real time
- Weather conditions for outdoor sports like NFL or MLB
- Betting splits and line movement that reveal sharp money behavior
- Rest and travel schedules that affect player fatigue and performance
- Historical head-to-head matchup data going back multiple seasons

The reason this matters is not just volume. It is objectivity. A model does not care that your favorite team won three straight. It does not panic after a bad beat. It identifies hidden patterns that human analysts routinely miss, including subtle statistical regularities buried inside thousands of data points that no human brain could hold and process at once.

For example, a model tracking NBA player props might notice that a specific point guard consistently underperforms his assist average when playing back-to-back games on the road against zone defenses. That is a five-variable pattern. Your gut is not catching that. The model is.

You can explore benchmarks for model-driven betting to see how these patterns translate into real-world accuracy. The difference between a model-informed pick and a casual gut pick is not luck. It is systematic information processing done at a scale humans simply cannot replicate manually.

Accuracy vs. intuition: The numbers behind the edge

Let’s put hard numbers on the table. The accuracy gap between model-driven betting and gut picks is measurable, documented, and significant.

| Approach | Accuracy range | Consistency |
|---|---|---|
| ML/ensemble models | 75-85% | High, systematic |
| Human gut picks | 50-60% | Variable, emotional |
| Single-stat models | 60-68% | Moderate |
| NBA stacked ensemble | 73.60% | High, validated |

The data is clear. ML and ensemble methods consistently outperform human intuition when predicting game winners. And it is not just theoretical. A stacked ensemble model tested on NBA games achieved 73.60% test accuracy, outperforming every single-model approach in the study.

“A 15-percentage-point accuracy advantage over gut picks does not just feel better. Over 100 bets, it is the difference between a profitable season and a losing one.”

Think about what a 10 to 15 percentage point accuracy improvement actually means in practice. If you place 200 bets per season at even odds, moving from 52% accuracy to 67% accuracy means 30 additional winning bets. At $50 per bet, each of those 30 bets turns a $50 loss into a $50 win, so the swing is roughly $3,000 in added profit from the same number of plays, just from better information processing.
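The arithmetic can be checked with a short sketch. It assumes even-money payouts (a win returns the stake, a loss costs the stake) and no vig, matching the example; the function name `season_profit` is ours, not part of any library. Keep in mind that each bet flipped from a loss to a win moves the bottom line by twice the stake, because it adds a win and removes a loss at the same time.

```python
def season_profit(bets: int, accuracy: float, stake: float) -> float:
    """Net profit at even odds: each win pays +stake, each loss costs -stake."""
    wins = bets * accuracy
    losses = bets - wins
    return (wins - losses) * stake


# Numbers taken from the example: 200 bets, $50 stake, 52% vs. 67% accuracy.
baseline = season_profit(200, 0.52, 50)
improved = season_profit(200, 0.67, 50)
extra_wins = 200 * (0.67 - 0.52)

print(f"extra wins:      {round(extra_wins)}")
print(f"profit at 52%:   ${round(baseline)}")
print(f"profit at 67%:   ${round(improved)}")
print(f"added profit:    ${round(improved - baseline)}")
```

Real sportsbook prices include vig, so actual profit at any given accuracy would be lower than this even-odds sketch suggests.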

That is the edge that model-driven betting creates. Not magic. Not luck. Just math applied consistently over time.

Psychology of gut picks: Why intuition fails

Here is the uncomfortable part. Your gut is not neutral. It is actively working against you in ways you probably do not notice.

Cognitive science has documented a range of mental shortcuts, called cognitive biases, that distort human judgment in high-stakes decisions. Betting is one of the worst environments for these biases to run unchecked. Research confirms that gut picks suffer from biases like overconfidence and loss aversion, leading to decisions that feel right but consistently underperform.

Here are the most damaging biases for sports bettors:

- Overconfidence bias: You overestimate how much your sports knowledge translates to predictive accuracy. Knowing a team’s roster does not mean you can predict their next cover.
- Recency bias: You weight the last few games too heavily. A team that won three straight feels like a lock, even if their underlying metrics are weak.
- Loss aversion: After a losing streak, you either chase losses with bigger bets or become overly conservative, both of which destroy bankroll management.
- Confirmation bias: You seek out information that supports your pick and ignore data that contradicts it.
- Narrative fallacy: You build a story around a matchup (“this team always shows up in big games”) that sounds compelling but has no statistical basis.

Models do not have any of these problems. A model does not feel the sting of last week’s bad beat. It does not get excited about a team’s playoff run. The edge is real precisely because models stay consistent regardless of what happened yesterday.

Pro Tip: Before placing any gut-driven bet, ask yourself: “Am I picking this because the data supports it, or because I want it to be true?” If you cannot answer that question honestly, trust the model.

When intuition matters: Model limits and expert nuance

To be fair, models are not infallible. Understanding where they break down is just as important as understanding where they excel.

Here are the key situations where human judgment can still add value:

- Thin betting markets. Models need data to function. In niche markets, like minor league props or obscure international leagues, there simply is not enough historical data to build reliable predictions. Sharp intuition from someone who watches those games closely can outperform a data-starved model.
- Non-stationary environments. When a team undergoes sudden change, a new coach, a locker room controversy, or a star player’s personal crisis, models trained on historical patterns may not adapt quickly enough. Human observers who follow teams closely can catch these shifts faster.
- Black swan events. A model trained on normal game conditions cannot anticipate a stadium power outage, a sudden weather shift, or a player playing through an undisclosed injury. These black swan events are exactly where rigid model outputs can mislead.
- Player behavioral tendencies. Some patterns are qualitative. A veteran quarterback who consistently elevates in playoff games, or a player who historically underperforms against a specific defender, may not show up clearly in aggregate stats but are visible to expert observers.
- Overfitting risk. A model that is too finely tuned to historical data can find patterns that look real but are just noise. This is called overfitting, and it leads to confident predictions that fall apart in live conditions.

Pro Tip: Use models as your primary decision engine, but keep a short list of “context flags” that you monitor manually. If a context flag fires, like a sudden lineup change or a locker room story, give it weight even if the model has not adjusted yet.

It is also worth noting that sharp sportsbooks like Pinnacle run their own real-time AI models, making it extremely difficult for amateur models to find consistent edges against their lines. Knowing which books to target matters as much as the model itself.

Turning model insights into winning bets: Your practical roadmap

Understanding models is one thing. Using them to actually improve your betting results is another. Here is a practical framework for making model-driven betting work in your day-to-day process.

| Step | Action | Why it matters |
|---|---|---|
| 1 | Choose a model with documented accuracy | Baseline credibility |
| 2 | Track closing line value (CLV) on every bet | Measures real edge |
| 3 | Set a flat betting unit (1-3% of bankroll) | Protects against variance |
| 4 | Log every bet with model output vs. actual result | Identifies drift |
| 5 | Review model performance monthly | Catches degradation early |
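Steps 3 and 4 lend themselves to a small sketch: a flat unit sized as a fixed fraction of bankroll, plus a bet log that compares the model's average predicted probability with the realized hit rate. Everything here is an assumption for illustration, including the `BetRecord` fields, the `drift` definition, and the four made-up log entries; it is one simple way to do it, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class BetRecord:
    model_prob: float  # model's win probability for the side taken (hypothetical field)
    stake: float       # dollars risked
    won: bool          # actual result


def flat_unit(bankroll: float, fraction: float = 0.02) -> float:
    """Stake sized as a fixed fraction of bankroll, kept within the 1-3% range."""
    if not 0.01 <= fraction <= 0.03:
        raise ValueError("fraction should stay within the 1-3% range")
    return round(bankroll * fraction, 2)


def drift(log: list[BetRecord]) -> float:
    """Realized hit rate minus the model's average predicted probability."""
    hit_rate = sum(r.won for r in log) / len(log)
    avg_pred = sum(r.model_prob for r in log) / len(log)
    return hit_rate - avg_pred


unit = flat_unit(2_000)  # 2% of a $2,000 bankroll

# Made-up entries purely to exercise the functions.
log = [
    BetRecord(0.60, unit, True),
    BetRecord(0.55, unit, False),
    BetRecord(0.65, unit, True),
    BetRecord(0.60, unit, False),
]

print(f"unit stake: ${unit}")
print(f"drift (hit rate - predicted): {drift(log):+.3f}")
```

A persistently negative drift over a large sample would suggest the model is overstating its probabilities; over a handful of bets it says very little.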

AI-powered betting increases win rates by 10-20% over gut picks for users who apply it consistently and with discipline. That number is not automatic. It requires you to actually follow the model output rather than overriding it every time your gut disagrees.

Here is what disciplined model use actually looks like in practice:

- Do not cherry-pick. If you only take model picks when they match your gut, you are not using the model. You are using your gut with extra steps.
- Track your overrides. Every time you go against the model, log it. After 30 days, compare your override win rate to your model-following win rate. Most bettors are shocked by what they find.
- Use props, not just game lines. Player props are where model-driven betting insights create the most exploitable edges, because the market is less efficient than game totals or spreads.
- Respect sample size. A model that is 60% accurate over 20 bets might just be running hot. Wait for 100+ bets before drawing conclusions about edge.
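The sample-size point can be made concrete with a binomial tail calculation: how often would a bettor with no edge at all (a true 50% win rate) still show 60% or better? This is a minimal sketch using only the standard library; the helper name `prob_at_least` is ours, and it assumes independent bets with the same win probability.

```python
from math import comb


def prob_at_least(wins: int, bets: int, p: float = 0.5) -> float:
    """P(X >= wins) for X ~ Binomial(bets, p)."""
    return sum(
        comb(bets, k) * p**k * (1 - p) ** (bets - k)
        for k in range(wins, bets + 1)
    )


p20 = prob_at_least(12, 20)    # 60% or better over 20 bets
p100 = prob_at_least(60, 100)  # 60% or better over 100 bets

print(f"no-edge bettor at 60%+ over 20 bets:  {p20:.1%}")
print(f"no-edge bettor at 60%+ over 100 bets: {p100:.1%}")
```

Under these assumptions, a coin-flip bettor reaches 60% over 20 bets about one time in four, but does so over 100 bets less than 3% of the time, which is why the longer record is far more informative.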

Research from KellyBench empirical benchmarks confirms that disciplined quantitative approaches outperform even advanced models when applied without emotional interference. The discipline is the edge, not just the algorithm.

The uncomfortable truth: Why most bettors misuse models (and how to fix it)

Here is something most betting content will not tell you: having access to a model is not enough. The majority of bettors who use models still lose, and it is not because the model is wrong. It is because they misuse it in predictable ways.

The biggest mistake is treating model accuracy as the only metric that matters. Accuracy is important, but it is not the full picture. What actually determines long-term profitability is closing line value, or CLV. CLV measures whether you consistently bet at better odds than where the line closes. If you are regularly beating the closing line, you are on the right side of the market, even during losing streaks. If you are not, even a high-accuracy model will not save your bankroll.
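As a rough illustration, CLV can be computed by comparing the price you took with the closing price. The article does not give a formula, so the sketch below uses one common convention (the ratio of decimal odds minus one) and ignores the vig baked into the closing line; the function names and the sample prices are assumptions for illustration.

```python
def american_to_decimal(odds: int) -> float:
    """Convert American odds (e.g. -110, +120) to decimal odds."""
    if odds == 0:
        raise ValueError("American odds cannot be 0")
    if odds > 0:
        return 1 + odds / 100
    return 1 + 100 / abs(odds)


def clv(bet_odds: int, closing_odds: int) -> float:
    """Closing line value as a fraction: positive means you beat the close."""
    return american_to_decimal(bet_odds) / american_to_decimal(closing_odds) - 1


# Hypothetical bet: taken at +110, line closed at -105 on the same side.
value = clv(110, -105)
print(f"CLV: {value:+.2%}")
```

A positive value means the bet was placed at a better price than the market's final number. Averaged over many bets, that figure is what the CLV-tracking step is meant to monitor.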

Most casual bettors obsess over win rate and ignore CLV entirely. That is a critical error.

Even accurate models frequently fail to turn a profit, and the reason is not that the predictions are wrong. It is that by the time the average bettor acts on a model output, the sharp money has already moved the line. The value is gone.

The fix is timing and line shopping. Act on model outputs early, before lines move. Use multiple books to find the best number. And track CLV religiously, because it is the single most honest signal of whether your approach has a real edge.
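Line shopping itself is mechanically simple: for the same side of the same market, take the book offering the highest payout. A minimal sketch, assuming American odds and placeholder book names (`book_a`, `book_b`, `book_c` are hypothetical, as are the prices):

```python
def american_to_decimal(odds: int) -> float:
    """Convert American odds (e.g. -110, +120) to decimal odds."""
    if odds == 0:
        raise ValueError("American odds cannot be 0")
    if odds > 0:
        return 1 + odds / 100
    return 1 + 100 / abs(odds)


def best_price(quotes: dict[str, int]) -> tuple[str, int]:
    """Return the (book, American odds) pair with the highest decimal payout."""
    return max(quotes.items(), key=lambda kv: american_to_decimal(kv[1]))


# Hypothetical quotes for the same side of one market.
quotes = {"book_a": -110, "book_b": -105, "book_c": 100}
book, odds = best_price(quotes)
print(f"best number: {odds:+d} at {book}")
```

Comparing on decimal odds rather than raw American numbers avoids mistakes when quotes straddle the plus and minus sides.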

Another common misuse is over-relying on a single model for all sports and all bet types. A model built for NBA game totals is not automatically reliable for NFL player props. Each market has different data structures, different efficiencies, and different levels of competition. Applying model-driven betting benchmarks appropriately means knowing which model fits which market.

The bettors who actually win long-term are not the ones with the fanciest algorithm. They are the ones who combine solid model outputs with disciplined bankroll management, sharp line shopping, and honest self-assessment of when they are adding value versus just adding noise.

Get your edge with Atlas Sports AI today

You have seen the data, the psychology, and the practical framework. Now the question is: where do you actually get model-driven picks that are built to exploit real market inefficiencies?

Atlas Sports AI brings together proven AI modeling with a focus on player props, the most inefficient and exploitable segment of the sports betting market. Instead of guessing on game lines where sharp books have already priced out the edge, Atlas Sports AI targets the spots where data-driven models create a measurable advantage. Whether you are just starting to move beyond gut picks or you are already tracking CLV and want sharper inputs, access Atlas Sports AI and put the numbers to work for your next bet. Stop guessing. Start modeling.

Frequently asked questions

How much more accurate are betting models compared to gut picks?

Betting models using ML and ensemble methods achieve 75-85% accuracy on game winners, compared to 50-60% for typical gut picks, a gap that compounds significantly over a full betting season.

Do models always beat human intuition in sports betting?

Models dominate in data-rich sports like NBA and NFL, but thin markets and non-stationary environments are exceptions where human intuition and contextual knowledge can still add meaningful value.

Can a disciplined bettor use models and intuition together?

Yes, and the best approach combines model outputs as the primary signal with human judgment reserved for context flags like sudden lineup changes, since models can miss black swan events that an informed observer catches quickly.

Why do some advanced models fail to beat the sportsbook?

Pro books run real-time AI models that adjust lines faster than most amateur models can react, meaning the edge disappears before the bet is placed. Beating the market requires acting early and tracking closing line value, not just accuracy.